Monitor page index status with Google Sheets, Apps Script and the Google Search Console API

Overview

Google just released URL inspection as part of the Search Console API. I check for issues periodically in Search Console but it would be great to just get an email when an issue crops up. The Apps Script project below does just that by monitoring URLs from your sitemap for changes and sending an email whenever anything is detected. The Search Console API has a limit of 2,000 calls per day and Apps Script also imposes a time limit on scripts. The approach I take below assigns a random day of the week to each URL to limit the number checked on each run. Depending on the size of your site you may want to remove this check (or go the other way if you have a large number of URLs to monitor). Follow the steps below to get your own monitoring spreadsheet up and running.
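The day-of-week partitioning described above can be sketched roughly as follows. This is an illustration only, not the script itself: the function names and the `checkDay` row property are assumptions, and plain JavaScript objects stand in for rows of the sheet.

```javascript
// Hypothetical helpers; names are illustrative and not taken from the original script.

// Pick a check day (0 = Sunday ... 6 = Saturday) for a newly discovered URL.
// The random source is injectable so the behaviour can be tested.
function assignCheckDay(random = Math.random) {
  return Math.floor(random() * 7);
}

// Return only the rows whose assigned check day matches the given date.
function urlsDueToday(rows, today = new Date()) {
  const day = today.getDay();
  return rows.filter((row) => row.checkDay === day);
}

// Usage: two tracked URLs, checked on a Monday (getDay() === 1).
const exampleRows = [
  { url: 'https://example.com/a', checkDay: 1 },
  { url: 'https://example.com/b', checkDay: 4 },
];
const dueOnMonday = urlsDueToday(exampleRows, new Date(2022, 1, 7));
console.log(dueOnMonday.map((row) => row.url));
```

With seven buckets, each run inspects roughly a seventh of the URLs, although a random assignment will not split them perfectly evenly.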

Google Sheet

Create a new spreadsheet in Google Drive and call it anything you want. Rename an empty sheet to 'gsc'; this sheet will store the index data. You don't need to make any other changes to this sheet.

Choose Apps Script from the Extensions menu. This will open up the script editor for your spreadsheet. I find that sometimes the editor opens with the wrong account if you're signed into more than one. If that applies to you, check quickly to make sure the right account is selected. With Code.gs selected, copy and paste the script below, replacing the default function:

There are a few configuration variables to enter at the top. AlertEmail is the email address to notify when index status changes are detected. SitemapUrl is the full URL of your sitemap. The current implementation does not support sitemap index files, so this needs to be a regular sitemap containing URLs. SearchConsoleProperty should be the URL of the site to monitor, or sc-domain: followed by the domain for domain properties (for this site, sc-domain:ithoughthecamewithyou.com).
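To make the sitemap requirement concrete, here is a minimal sketch of pulling page URLs out of a regular sitemap and rejecting a sitemap index. It is not the script's actual parsing code: the function names are hypothetical, and it uses a regular expression for brevity where a real Apps Script project would more likely use an XML parser.

```javascript
// Hypothetical helpers for illustration; not the post's actual implementation.

// Decode the five predefined XML entities that can appear inside <loc>.
function decodeXmlEntities(text) {
  return text
    .replace(/&lt;/g, '<')
    .replace(/&gt;/g, '>')
    .replace(/&quot;/g, '"')
    .replace(/&apos;/g, "'")
    .replace(/&amp;/g, '&'); // last, so "&amp;lt;" becomes "&lt;" and is not decoded twice
}

// Extract the <loc> values from sitemap XML text.
// Throws if the document is a sitemap index rather than a regular sitemap.
function extractSitemapUrls(xml) {
  if (/<sitemapindex[\s>]/i.test(xml)) {
    throw new Error('Sitemap index files are not supported; use a regular sitemap.');
  }
  const urls = [];
  const pattern = /<loc>\s*([^<]+?)\s*<\/loc>/gi;
  let match;
  while ((match = pattern.exec(xml)) !== null) {
    urls.push(decodeXmlEntities(match[1]));
  }
  return urls;
}

// Usage with a tiny inline sitemap.
const sampleSitemap =
  '<urlset><url><loc>https://example.com/</loc></url>' +
  '<url><loc> https://example.com/?a=1&amp;b=2 </loc></url></urlset>';
console.log(extractSitemapUrls(sampleSitemap));
```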

Click Project Settings (the cog in the left hand menu of the script editor) and copy the Script ID. Make a note of this for later.

API Console

Next we need to configure the Search Console API in the Google Cloud Platform Console.

- Create a new project (click the drop down to the right of 'Google Cloud Platform Console' and then New Project). Pick any name you like.

- Once the project is created, find APIs and Services in the left hand menu and choose Library.

- Search for Search Console, click on the Search Console API and then click Enable.

- A new screen will load, click Credentials in the left hand menu.

- Click Configure Consent Screen and choose the internal type.

- Fill in the required fields - application name and contact emails.

- Add the .../auth/webmasters.readonly scope (https://www.googleapis.com/auth/webmasters.readonly) for read-only access to Search Console data.

- Once the consent screen is complete click Credentials in the left hand menu again.

- Click Create Credentials at the top and choose OAuth Client ID.

- Choose Web Application.

- Add https://script.google.com/macros/d/{SCRIPTID}/usercallback to Authorized redirect URIs, replacing {SCRIPTID} (including the braces) with the Apps Script ID you noted above.

- Make a note of the Project ID.

Complete the Apps Script

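Return to the Apps Script project and find the settings page. Set the GCP project to the project you noted above (the settings page asks for the project number, which the Cloud Console shows alongside the project ID). Also on this page, check the Show "appsscript.json" manifest file in editor option.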

Go to the code editor and open appsscript.json. Add the following line:

"oauthScopes": ["https://www.googleapis.com/auth/script.external_request", "https://www.googleapis.com/auth/webmasters.readonly", "https://www.googleapis.com/auth/spreadsheets.currentonly", "https://www.googleapis.com/auth/script.send_mail"],

Make sure the script is saved and close the script editor window. Reload the spreadsheet. Once the reload completes you should have a Search Console menu at the top of the spreadsheet. Choose Update Data from the Search Console menu. This will run for a few minutes and then populate the 'gsc' sheet with your URL data. See UrlInspectionResult in the API documentation for more information about the meaning of each field. You should also get a lengthy email with a notification for each URL that was inspected. This will continue for the first week and then you'll only get updates for interesting status changes.
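For readers curious about what each inspection involves, the sketch below builds a request for the URL Inspection endpoint and flattens the index status part of the response into a row-like object. The helper names and the choice of fields to keep are assumptions for illustration; in Apps Script the returned `url` and `options` would be passed to `UrlFetchApp.fetch`, and the access token would come from whichever OAuth approach the script uses.

```javascript
// Hypothetical helpers; the field selection is an assumption, not the script's exact columns.

const INSPECT_ENDPOINT =
  'https://searchconsole.googleapis.com/v1/urlInspection/index:inspect';

// Build the URL and fetch options for inspecting one page.
// In Apps Script: UrlFetchApp.fetch(request.url, request.options)
function buildInspectionRequest(pageUrl, property, accessToken) {
  return {
    url: INSPECT_ENDPOINT,
    options: {
      method: 'post',
      contentType: 'application/json',
      headers: { Authorization: 'Bearer ' + accessToken },
      payload: JSON.stringify({ inspectionUrl: pageUrl, siteUrl: property }),
      muteHttpExceptions: true, // inspect the status code instead of throwing
    },
  };
}

// Reduce a parsed API response to a flat object of index status fields.
// Missing fields become empty strings so every row has the same shape.
function summarizeIndexStatus(responseBody) {
  const inspection = (responseBody && responseBody.inspectionResult) || {};
  const status = inspection.indexStatusResult || {};
  const fields = [
    'verdict', 'coverageState', 'robotsTxtState', 'indexingState',
    'pageFetchState', 'lastCrawlTime', 'googleCanonical', 'userCanonical',
  ];
  const summary = {};
  for (const field of fields) {
    summary[field] = status[field] || '';
  }
  return summary;
}
```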

Scheduling

Now the project is working, open the script editor again (Apps Script from the Extensions menu) and open Triggers (the clock icon in the left hand menu). Click Add Trigger at the very bottom right of the window. Select runUpdate as the function to run. Change event source to time-driven, and then select day timer and an hour to run the script. Lastly click Save. Your Google Search Console monitor will now run every day, and if the index status of a page changes you'll get an email about it within a week. The spreadsheet will also come in handy for other analysis and reporting.
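The alerting step boils down to comparing the stored status for a URL with the freshly inspected one. A minimal sketch follows, assuming a hypothetical list of watched fields; which changes count as interesting in the real script may differ.

```javascript
// Hypothetical change detection; the watched fields are an assumption.

const WATCHED_FIELDS = [
  'verdict', 'coverageState', 'robotsTxtState', 'indexingState', 'pageFetchState',
];

// Render a possibly missing value for use in a message.
function showValue(value) {
  return value === undefined || value === null || value === '' ? '(none)' : String(value);
}

// Compare the previous and current status of one URL and return one
// human-readable line per changed field. A URL with no previous status
// reports every field that now has a value.
function describeChanges(url, previous, current, fields = WATCHED_FIELDS) {
  const lines = [];
  for (const field of fields) {
    const before = showValue(previous ? previous[field] : undefined);
    const after = showValue(current[field]);
    if (before !== after) {
      lines.push(url + ': ' + field + ' changed from "' + before + '" to "' + after + '"');
    }
  }
  return lines;
}
```

In Apps Script the collected lines could be joined and sent with `MailApp.sendEmail(recipient, subject, body)` once the run finishes, so a single email covers every change.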

Troubleshooting

You might need to tweak the script if you start hitting limits. The URL inspection API currently has a quota of 2,000 calls per day. Apps Script will only run for around 7 minutes on a free account and 20 minutes if you have Google Workspace. If either of these limits applies, you could modify the 'checkDay' logic to use day of the month (or year, or ...) to reduce the number of URLs inspected on each run. If you need to do this, remember to update the Check Day column on the 'gsc' sheet as well.
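As a rough way to decide how many buckets you need, the arithmetic can be sketched like this. The seconds-per-call figure is something you would have to measure yourself, the function names are hypothetical, and the estimate assumes one run per day.

```javascript
// Hypothetical sizing helpers; all inputs are estimates you supply.

// How many buckets are needed so that one run stays within both the
// daily API quota and the script execution time limit.
function bucketsNeeded(urlCount, secondsPerCall, maxRuntimeSeconds, dailyQuota = 2000) {
  const perRunByTime = Math.floor(maxRuntimeSeconds / secondsPerCall);
  const perRun = Math.min(dailyQuota, perRunByTime);
  if (perRun < 1) {
    throw new Error('A single call does not fit in the time limit.');
  }
  return Math.max(1, Math.ceil(urlCount / perRun));
}

// Which bucket is due on a given date, cycling through the buckets one per day.
function bucketForDate(date, buckets) {
  const dayNumber = Math.floor(
    Date.UTC(date.getFullYear(), date.getMonth(), date.getDate()) / 86400000
  );
  return dayNumber % buckets;
}

// Usage: 3,000 URLs at an assumed 1.5 seconds per call with a 6 minute budget.
// 360 / 1.5 = 240 calls per run, and 3000 / 240 = 12.5, so 13 buckets.
console.log(bucketsNeeded(3000, 1.5, 360));
```

Because URLs are assigned to buckets at random, some buckets will be larger than average, so it is worth leaving some headroom rather than using the exact minimum.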

The script assumes that the URLs you want to monitor are in your sitemap. If this is not the case you can add URLs to the sheet directly. As long as they are part of the configured property you will still get results. If you use this method you might want to comment out the updatePagesFromSitemap() call in runUpdate() to save time.

If anything else goes wrong please leave a comment below and I'll do my best to help you.

Updated 2022-02-08 17:40:

After a couple of days I have a full dump of my sitemap from the page index status API. I wrote this script for the alerting possibilities but couldn't resist some analytics once the dataset was complete.

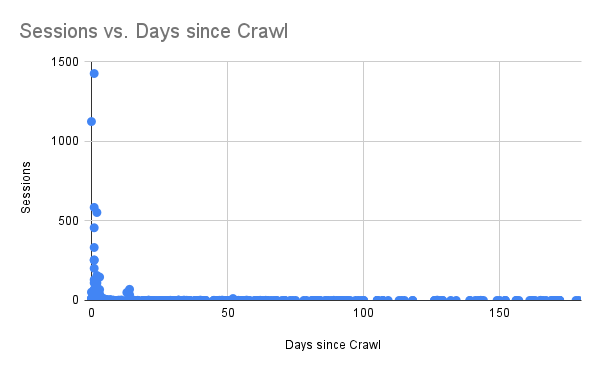

The chart above shows sessions vs days since the last Google crawl. Pretty stark: Google keeps a close eye on the pages it sends traffic to and not so much on the others.

I set lastmod honestly and there is good news here. I could only find two cases where Google had not crawled the page since the last modified date. So when the sitemap says a page has changed the odds are good that it will get another crack at the index. The two exceptions are unusual posts that are updated hourly and weekly respectively and both have been crawled recently.
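The lastmod comparison is straightforward to reproduce once the sheet is populated. Here is a minimal sketch, assuming each page is represented as an object with hypothetical `lastmod` (from the sitemap) and `lastCrawlTime` (from the API) properties holding ISO 8601 date strings.

```javascript
// Hypothetical analysis helper; property names are assumptions.

// Return the pages Google has not crawled since their sitemap lastmod date.
// Pages without a lastmod are skipped; pages never crawled are included.
function notCrawledSinceLastmod(pages) {
  return pages.filter((page) => {
    if (!page.lastmod) return false;
    if (!page.lastCrawlTime) return true;
    return new Date(page.lastCrawlTime) < new Date(page.lastmod);
  });
}

// Usage: only the second page was modified after its last crawl.
const samplePages = [
  { url: 'https://example.com/a', lastmod: '2022-01-10', lastCrawlTime: '2022-02-01T08:00:00Z' },
  { url: 'https://example.com/b', lastmod: '2022-02-05', lastCrawlTime: '2022-02-01T08:00:00Z' },
];
console.log(notCrawledSinceLastmod(samplePages).map((page) => page.url));
```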

The breakdown of index status matches Google Search Console pretty well, but I have a handful of pages that are 'Indexed, not submitted in sitemap', even though they are in the sitemap and no such status is shown in Search Console. I don't know if this is a glitch in the index status API or something to do with how the pages were discovered. Some light searching suggests that this status usually means exactly what it says.

Lastly, updating the sheet for my site is more bound by script execution time than the API limits. I changed it to run every hour and instead of partitioning by day of week I used a random hour of the day which means I check every URL at least once every 24 hours.

Updated 2022-04-24 10:50:

I just updated the code and post above. I've had occasional issues where updating the sheet failed, which caused the next run to go back to the beginning with no saved index status. To reduce the chance of this happening I've added some retry logic and also improved the speed of the sheet load and save functions. I also had a comment on the code that suggested an easier way to handle OAuth and have incorporated this in the new version.
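The retry logic mentioned above could look something like the sketch below. This is a generic retry with exponential backoff rather than the exact code in the script: the function name, attempt count and delays are all assumptions, and the sleep function is passed in so that Apps Script callers can supply `Utilities.sleep`.

```javascript
// Hypothetical retry wrapper; defaults are illustrative.

// Run a synchronous operation, retrying on failure with exponential backoff.
// Rethrows the last error if every attempt fails.
function withRetry(operation, options = {}) {
  const attempts = options.attempts || 3;
  const baseDelayMs = options.baseDelayMs || 1000;
  const sleep = options.sleep || function () {}; // no-op unless a sleep function is supplied
  let lastError;
  for (let attempt = 1; attempt <= attempts; attempt++) {
    try {
      return operation();
    } catch (err) {
      lastError = err;
      if (attempt < attempts) {
        // Wait 1x, 2x, 4x ... the base delay between attempts.
        sleep(baseDelayMs * Math.pow(2, attempt - 1));
      }
    }
  }
  throw lastError;
}

// In Apps Script this might wrap the sheet save (saveSheet is a placeholder name):
// withRetry(() => saveSheet(rows), { sleep: (ms) => Utilities.sleep(ms) });
```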



Google search-for-your-own-verified-sites Console

I don't know about you, but when it comes to Google Search Console I spend about 0.01% of the time adding sites and 99.99% analyzing existing ones. And yet when signing into Search Console with many verified sites the interface is ALL about adding a new one. Maybe 10% of the UX would be reasonable but it looks for all the world like I have nothing added.

To get to my sites I need to click the hamburger. Come on Google, being mobile first doesn't have to mean being desktop hostile.

Clicking the hamburger isn't even enough. This just brings up a practically blank sidebar. I then need to expand the 'Search property' drop down. Finally I get a needlessly scrolling list of my sites.
