Using the Azure Monitor REST API from Google Apps Script

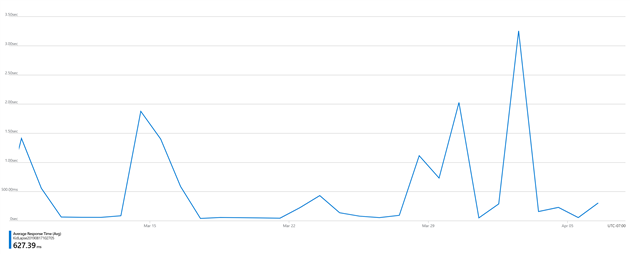

This post describes how to get metrics (in this case average response time) from an Azure App Service into a Google Sheet. I’m doing this so I can go from the sheet to a Data Studio dashboard. I already have a report in Data Studio that pulls from Ads, Analytics and other sources. I’d rather spend hours adding Azure there than be forced to have one more tab open. You might have different reasons. Read on.

- Create a Google Sheet and give it a memorable name. Rename the first sheet to AvgResponseTime and put ‘Date’ in A1 and ‘Average Response Time’ in B1.

- Create a script (Script editor from the Tools menu) and give that a good name as well.

- In the script editor, pick Libraries from the Resources menu. Enter 1B7FSrk5Zi6L1rSxxTDgDEUsPzlukDsi4KGuTMorsTQHhGBzBkMun4iDF (the script ID of Google's OAuth2 library for Apps Script), pick the latest version and click Save.

- Select Project properties from the File menu and make a note of the Script ID.

- Log into your Azure Console and then go to https://resources.azure.com/. You are looking for a metricDefinitions node for the resource that you want to monitor. In my case it’s subscriptions / MyName Subscription / resourceGroups / MyResourceGroup / providers / Microsoft.Web / sites / MySite / metricDefinitions. Browse through this list to find the id of the metric you’re interested in; for me it’s AverageResponseTime. Finding this was the hardest part. Microsoft’s documentation for resourceUri is literally ‘The identifier of the resource.’ Why even bother, Microsoft? Make a note of the id and remove ‘/metricDefinitions/AverageResponseTime’ from the end, because of course the ID isn’t quite right for some reason. Mine looks something like this: /subscriptions/mylongid/resourceGroups/mysomethingResourceGroup/providers/Microsoft.Web/sites/mysiteid

- Go back to the Azure Console and open Azure Active Directory. Select App registrations under Manage and create a New registration. Time to come up with another name. You probably want ‘Accounts in this organizational directory only’. The redirect URL is https://script.google.com/macros/d/SCRIPTID/usercallback - replace SCRIPTID with the Script ID you made a note of in step 4.

- Click the View API permissions button, then Add a permission, and then pick Azure Service Management. I’m using Delegated permissions and the user_impersonation permission. Then click Grant admin consent for Default Directory (or whatever your directory is called).

- Go to Certificates & secrets (under Manage) and create a new client secret. Make a careful note of its value; the portal only shows it once.

- Go to Authentication (under Manage), check Access tokens under Implicit grant and then click Save at the top of the page.

- Go to Overview and make a note of your Application (client) ID and Directory (tenant) ID.

- You are getting close! Go to the script editor (from step 2) and paste in the code at the bottom of this post. There are four variables to enter at the top of the script. ClientID and TennantID are from step 10. ClientSecret is from step 8. ResourceID is from step 5. Save the script.

- Reload the spreadsheet (back from step 1). You should get an Azure Monitor menu item. Choose Authorize from this menu. Google will ask you to authorize the script; do this for the Google account you’re using. Choose Authorize again, and this time a sidebar will appear with a link. Follow the link and authorize against Azure (if you’re already logged in, this might go straight to success). If you get authorization errors in the future, run this step again. If that doesn’t help, use Reset Settings and then try again.

- You should be ready to get data. Choose Fetch Data from the Azure Monitor menu. If this doesn’t work, check through steps 1-12 carefully again!

- Last step: automate. Go back to the script editor. Choose Current project’s triggers from the Edit menu. Add a trigger (the small blue button hidden at the bottom right of the screen; not everything needs a floating action button, Google!) to run fetchData daily at some reasonable time.
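The client ID, tenant ID, secret and redirect URI collected above all end up in the OAuth2 library's service configuration. The original script isn't reproduced here, so this is only a sketch under assumptions: it targets the Microsoft identity platform v2.0 endpoints, and the scope combines the Azure Service Management user_impersonation permission with offline_access (the "offline access" a commenter below mentions needing). azureOAuthSettings is a hypothetical helper, not part of the author's code or the library.

```javascript
// Hypothetical helper (not from the original script). Assumes the
// Microsoft identity platform v2.0 authorize and token endpoints.
function azureOAuthSettings(tenantId, scriptId) {
  var base = 'https://login.microsoftonline.com/' +
      encodeURIComponent(tenantId) + '/oauth2/v2.0/';
  return {
    authorizationBaseUrl: base + 'authorize',
    tokenUrl: base + 'token',
    // Delegated Azure Service Management permission, plus offline_access
    // so the token response includes a refresh token.
    scope: 'https://management.azure.com/user_impersonation offline_access',
    // Must match the redirect URI registered with the app in Azure.
    redirectUri: 'https://script.google.com/macros/d/' + scriptId + '/usercallback'
  };
}

// Placeholder IDs for illustration only.
var settings = azureOAuthSettings('my-tenant-id', 'my-script-id');
```

With the OAuth2 library these values would be passed to a service created with OAuth2.createService(...) through setAuthorizationBaseUrl, setTokenUrl, setClientId, setClientSecret, setScope, setCallbackFunction and setPropertyStore(PropertiesService.getUserProperties()). The library works out the redirect URI itself, so redirectUri here is just a way to double-check what you registered in Azure.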
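The resource ID noted while browsing resources.azure.com is what the metrics request is built from. Since the author's script isn't shown here, the following is a minimal sketch assuming version 2018-01-01 of the Azure Monitor Microsoft.Insights/metrics REST API and a metric definition id that ends in /metricDefinitions/<name>. The helper names resourceIdFromMetricDefinitionId and buildMetricsUrl are hypothetical. It uses no Apps Script services, so it runs in the script editor and in Node alike.

```javascript
// Hypothetical helpers (not from the original script). Assumes the
// Azure Monitor metrics REST API, api-version 2018-01-01.
var MANAGEMENT_HOST = 'https://management.azure.com';

/**
 * Removes a trailing "/metricDefinitions/<name>" segment (any letter case)
 * from a metric definition id copied from resources.azure.com, leaving the
 * resource ID. Ids without that suffix are returned unchanged.
 */
function resourceIdFromMetricDefinitionId(metricDefinitionId) {
  return metricDefinitionId.replace(/\/metricdefinitions\/[^\/]+\/?$/i, '');
}

/**
 * Builds a GET URL asking Azure Monitor for one metric, averaged over each
 * interval (an ISO 8601 duration such as 'PT1H' or 'P1D'), between two
 * ISO 8601 timestamps.
 */
function buildMetricsUrl(resourceId, metricName, startIso, endIso, interval) {
  var params = {
    'api-version': '2018-01-01',
    metricnames: metricName,
    timespan: startIso + '/' + endIso,
    interval: interval,
    aggregation: 'Average'
  };
  var query = Object.keys(params).map(function (key) {
    return encodeURIComponent(key) + '=' + encodeURIComponent(params[key]);
  }).join('&');
  return MANAGEMENT_HOST + resourceId +
      '/providers/Microsoft.Insights/metrics?' + query;
}

// Example using the placeholder id from the post.
var resourceId = resourceIdFromMetricDefinitionId(
    '/subscriptions/mylongid/resourceGroups/mysomethingResourceGroup' +
    '/providers/Microsoft.Web/sites/mysiteid/metricDefinitions/AverageResponseTime');
var url = buildMetricsUrl(resourceId, 'AverageResponseTime',
    '2020-01-01T00:00:00Z', '2020-01-02T00:00:00Z', 'PT1H');
```

In Apps Script the URL would then be fetched with something like UrlFetchApp.fetch(url, { headers: { Authorization: 'Bearer ' + service.getAccessToken() } }), assuming service is the script's OAuth2 service object, and JSON.parse(response.getContentText()) gives the metrics payload.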
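For Fetch Data and the daily trigger, the response has to be flattened into rows under the Date and Average Response Time headers from step 1, and a once-a-day run needs a fixed window to ask for. A minimal sketch of both, assuming the 2018-01-01 response shape (value[].timeseries[].data[] entries with timeStamp and average fields) and a window covering the previous UTC day; previousDayTimespan and metricsResponseToRows are hypothetical names, not functions from the original script.

```javascript
// Hypothetical helpers (not from the original script). Assumes the
// Azure Monitor metrics response shape for api-version 2018-01-01.
var MS_PER_DAY = 24 * 60 * 60 * 1000;

/**
 * Returns "start/end" ISO 8601 timestamps covering the whole UTC day
 * before `now`, suitable for the timespan query parameter.
 */
function previousDayTimespan(now) {
  var end = new Date(Date.UTC(
      now.getUTCFullYear(), now.getUTCMonth(), now.getUTCDate()));
  var start = new Date(end.getTime() - MS_PER_DAY);
  return start.toISOString() + '/' + end.toISOString();
}

/**
 * Flattens a metrics response into [Date, average] rows. Data points with
 * no samples in their interval may omit `average`, so those are skipped.
 */
function metricsResponseToRows(response) {
  var rows = [];
  (response.value || []).forEach(function (metric) {
    (metric.timeseries || []).forEach(function (series) {
      (series.data || []).forEach(function (point) {
        if (typeof point.average === 'number') {
          rows.push([new Date(point.timeStamp), point.average]);
        }
      });
    });
  });
  return rows;
}

// Example with a hand-written response (not real Azure output).
var sample = {
  value: [{
    name: { value: 'AverageResponseTime' },
    timeseries: [{
      data: [
        { timeStamp: '2020-01-01T00:00:00Z', average: 0.25 },
        { timeStamp: '2020-01-01T01:00:00Z' }
      ]
    }]
  }]
};
var rows = metricsResponseToRows(sample);
var span = previousDayTimespan(new Date(Date.UTC(2020, 0, 2, 10, 30)));
```

In Apps Script, a non-empty rows array could be appended with sheet.getRange(sheet.getLastRow() + 1, 1, rows.length, 2).setValues(rows), where sheet is the AvgResponseTime sheet; check rows.length first, because getRange rejects a zero-row range. The trigger can also be created in code with ScriptApp.newTrigger('fetchData').timeBased().everyDays(1).atHour(6).create() instead of through the triggers page.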

More Google Apps Script Projects

- Get an email when your security camera sees something new (Apps Script + Cloud Vision)

- Get an email if your site stops being mobile friendly (no longer available)

- Export Google Fit Daily Steps, Weight and Distance to a Google Sheet

- Email Alerts for new Referers in Google Analytics using Apps Script

- Animation of a year of Global Cloud Cover

- Control LIFX WiFi light bulbs from Google Apps Script

- How to backup Google Photos to Google Drive automatically after July 2019 with Apps Script

- Using the Todoist API to set a due date on the Alexa integration to-do list (with Apps Script)

- Automate Google PageSpeed Insights and Core Web Vitals Logging with Apps Script

- Monitor page index status with Google Sheets, Apps Script and the Google Search Console API

(Published to the Fediverse as: Using the Azure Monitor REST API from Google Apps Script #code #azure #appsscript #gas #google #microsoft How to call the Azure Monitor REST API via OAuth from Google Apps Script. Worked example shows how to log average response time for an Azure App Service. )

Comments

Thank you Robert for your answer. I'm doing this in Apps Script. I found two points to correct:

- The API permissions were OK, but I had missed the last part of line 21 of your code: 'offline access'

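- I also added this code after line 21:

```javascript
// If there is no valid access token, try to get a new one with the stored refresh token
if (!service.hasAccess()) {
  service.refresh();
}
```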

Now it works as desired.

Thank you again for the hint!

Hi Daniele, are you doing this in Apps Script or something else? You need to request offline access, save the refresh token, and then use it to refresh access as needed. If you're using a different language, you'll need to look at its OAuth implementation to see how this is handled. If it's Apps Script based on the code above and it isn't working, then it's possibly something to do with step 7 above. It's also sometimes worth revoking access completely and then reauthorizing.

Thank you Robert for this code. I need something different, but with small changes I got what I need.

One question, if you can. Every time I'm asked to grant permissions via graphical consent (new browser tab). I'm setting up an app that runs in batch every night, so I'd like to give consent on the first time it runs, and then no more need to grant permission.

I don't know exactly how to do this with Microsoft Cloud Identity: any hint?

Thank you in advance.

Thanks Robert! Your OAuth2 code for Azure and guide for the setup were just what I needed! In my case I used it to trigger an Azure Data Factory pipeline (timed triggers were going to cause frustration for my users; an on-demand trigger will be much better!). The scenario / workflow for us is:

On premise SQL timecard data >

User triggers Azure Data Factory pipeline run to synchronize on-prem & Azure SQL using the google sheet (and thanks to your code!) >

User maps time card coding to contract items in the sheet >

Dynamically create SQL query within the sheet based on job / contract mapping >

Pass SQL query to Azure, return results to sheet >

Gscript function exports CSV results to GDrive in blocks of 15 lines (limit on Fed provided PDF import form) >

User imports CSV data file into PDF form (need to work on that automation still!)

Finding a way to authenticate with Azure AD to be able to trigger the pipeline manually was key. Your guide and code were well done and easy to follow and adapt. Thanks again!

Pat
